Peer-to-peer networks can be as small as two computers or as large as hundreds of systems and devices. Although there is no theoretical limit to the size of a peer-to-peer network, performance, security, and access become a major headache on peer-based networks as the number of computers increases. In addition, Microsoft imposes a limit of only 5, 10 or 20 concurrent client connections to computers running Windows. This means that a maximum of 20 (or fewer) systems will be able to concurrently access shared files or printers on a given system. This limit is expressed as the “Maximum Logged On Users” and can be seen by issuing the NET CONFIG SERVER command at a command prompt. This limit is normally unchangeable and is fixed in the specific version and edition of Windows as follows:

- 5 users: Windows XP Home, Vista Starter/Home Basic.
- 10 users: Windows NT, 2000, XP Professional, Vista Home Premium/Business/Enterprise/Ultimate.

When more than the allowed limit of users or systems try to connect, the connection is denied and the client sees an error message such as the following: No more connections can be made to this remote computer at this time because there are already as many connections as the computer can accept.
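To make the connection limit concrete, here is a minimal Python sketch of a host that accepts connections only up to a fixed cap and denies the rest. It is a toy model, not how Windows enforces the limit internally: the `SharingHost` class, its methods, and the client names are hypothetical, and the table includes only some of the editions listed above.

```python
# Toy model of a per-edition cap on concurrent client connections.
# Assumption: SharingHost and its methods are invented for illustration;
# Windows does not expose an API like this.

# Limits taken from the edition list in the text (subset only).
EDITION_LIMITS = {
    "Windows XP Home": 5,
    "Windows Vista Home Basic": 5,
    "Windows XP Professional": 10,
    "Windows Vista Business": 10,
}

ERROR_MESSAGE = (
    "No more connections can be made to this remote computer at this time "
    "because there are already as many connections as the computer can accept."
)


class SharingHost:
    """A computer that shares files or printers with a capped number of clients."""

    def __init__(self, edition):
        self.limit = EDITION_LIMITS[edition]
        self.clients = set()

    def connect(self, client):
        # A client that is already connected does not use a second slot.
        if client in self.clients:
            return "already connected"
        # Deny the connection once the cap is reached.
        if len(self.clients) >= self.limit:
            return ERROR_MESSAGE
        self.clients.add(client)
        return "connected"

    def disconnect(self, client):
        # Freeing a slot lets another client connect later.
        self.clients.discard(client)


host = SharingHost("Windows XP Home")
results = [host.connect(f"pc{i}") for i in range(1, 7)]
```

In this sketch the first five clients connect and the sixth receives the error message. Once any client disconnects, the freed slot becomes available again.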

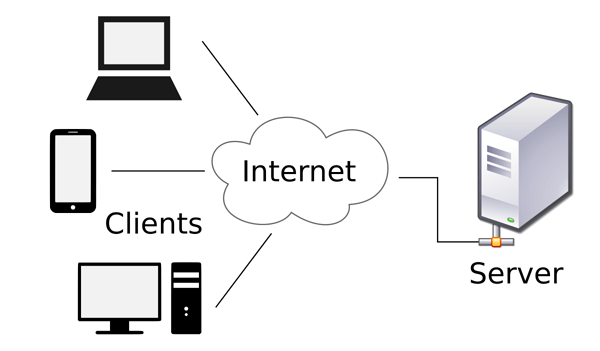

A dedicated server computer often has faster processors, more memory, and more storage space than a client because it might have to service dozens or even hundreds of users at the same time. High-performance servers typically use from two to eight processors (and that’s not counting multi-core CPUs), have many gigabytes of memory installed, and have one or more server-optimized network interface cards (NICs), RAID (Redundant Array of Independent Drives) storage consisting of multiple drives, and redundant power supplies. Servers often run a special network OS, such as Windows Server, Linux, or UNIX, that is designed solely to facilitate the sharing of its resources. These resources can reside on a single server or on a group of servers. When more than one server is used, each server can “specialize” in a particular task (file server, print server, fax server, email server, and so on) or provide redundancy (duplicate servers) in case of server failure. For demanding computing tasks, several servers can act as a single unit through the use of parallel processing.

By contrast, on a peer-to-peer network, every computer is equal and can communicate with any other computer on the network to which it has been granted access rights. Essentially, every computer on a peer-to-peer network can function as both a server and a client. Any computer on a peer-to-peer network is considered a server if it shares a printer, a folder, a drive, or some other resource with the rest of the network. This is why you might hear about client and server activities, even when the discussion is about a peer-to-peer network.
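The dual role of a peer can be illustrated with a small Python sketch. It is an in-memory model only, with no real networking: the `Peer` class, its methods, and the machine and resource names are all hypothetical. A peer counts as a server as soon as it shares something, and it acts as a client whenever it fetches a resource from another peer that has granted it access.

```python
# Toy model: each peer can act as both a server and a client.
# Assumption: Peer, share(), and fetch() are invented for illustration.

class Peer:
    def __init__(self, name):
        self.name = name
        # resource name -> (content, set of peer names granted access)
        self.shared = {}

    def share(self, resource, content, allow):
        """Server role: offer a resource to the named peers."""
        self.shared[resource] = (content, set(allow))

    def is_server(self):
        # A peer is considered a server if it shares at least one resource.
        return bool(self.shared)

    def fetch(self, other, resource):
        """Client role: request a resource that another peer shares."""
        content, allowed = other.shared[resource]
        if self.name not in allowed:
            raise PermissionError(f"{self.name} has no access to {resource}")
        return content


office_pc = Peer("office-pc")
laptop = Peer("laptop")
guest = Peer("guest")

# office-pc acts as a server for a folder; laptop acts as a server for a printer.
office_pc.share("reports", "Q3 figures", allow=["laptop"])
laptop.share("printer", "print queue", allow=["office-pc"])

# Each machine is also a client of the other.
report = laptop.fetch(office_pc, "reports")
queue = office_pc.fetch(laptop, "printer")
```

Here `guest` shares nothing, so it is only ever a client, and it has not been granted access to either resource.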
